19 famous predictions that missed the mark

Bad predictions are like time capsules with punchlines. They capture smart people, confident institutions, or loud headlines misreading the future—and they do it in quotable, replayable ways. The fun is partly schadenfreude, but it’s also educational: we see the assumptions that broke, the blind spots that mattered, and the new facts that arrived to flip the script.

Rewinding those moments makes today’s certainty feel a little less, well, certain.

They also come with receipts. Newspapers mocked rockets; economists declared a “permanently high plateau”; labels passed on bands that soon ruled the charts. Each case pairs a crisp claim with an equally crisp outcome you can actually measure—ships that sank, stocks that crashed, gadgets that took over living rooms. The result is a highlight reel of human overconfidence that doubles as a study guide for humility.

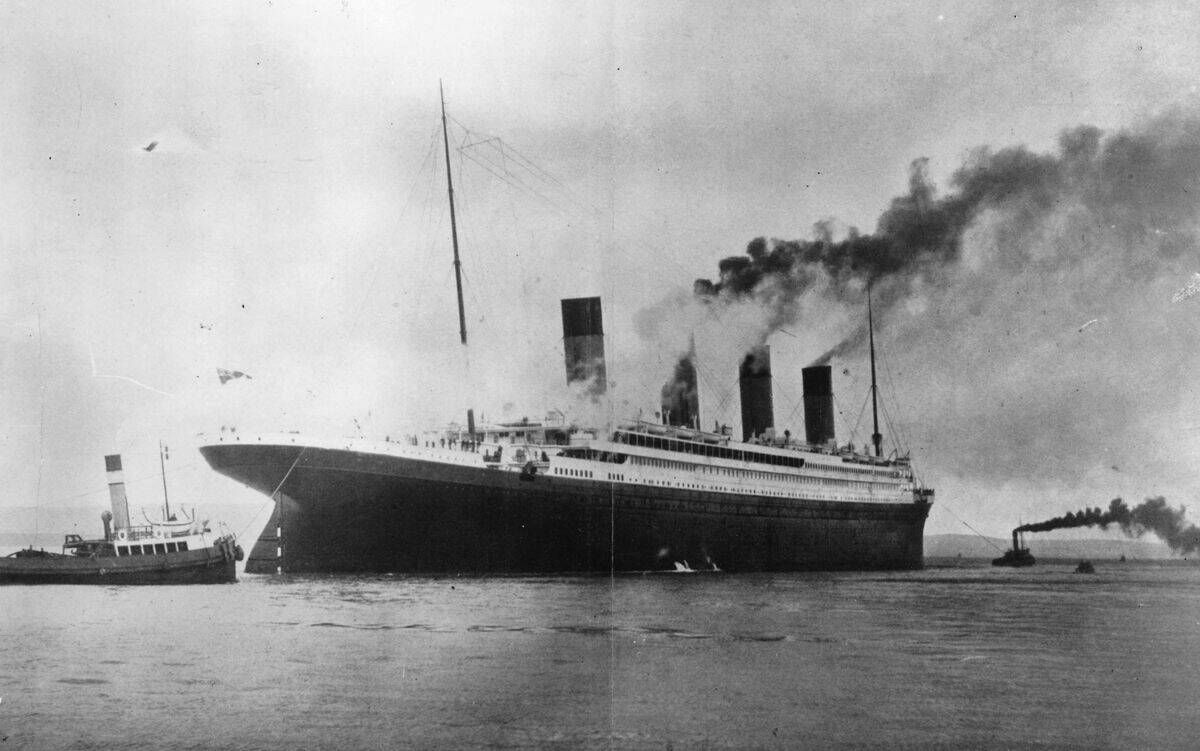

The “unsinkable” Titanic that most definitely sank

Before its 1912 voyage, the RMS Titanic was routinely described as “practically unsinkable,” thanks to watertight compartments and safety hype that echoed through Edwardian media. Yet on April 14–15, 1912, an iceberg strike led to flooding across multiple compartments, dooming the ship. About 2,224 people were aboard; around 1,500 died. Lifeboat capacity covered roughly 1,178, just over half of those aboard: enough to meet regulations, but not reality. It’s a lesson in how technological bravado and regulatory complacency can fail catastrophically at the same time.

The aftermath rewrote rules.

The 1914 International Convention for the Safety of Life at Sea (SOLAS) mandated enough lifeboats for all, continuous radio watches, and better ice patrols. Titanic’s wreck, resting nearly 12,500 feet down, wasn’t found until 1985, when a Franco‑American team led by Robert Ballard and Jean‑Louis Michel located it. The ship’s fate still anchors safety training, museum exhibits, and, yes, a blockbuster that ensured few will ever again call a ship unsinkable.

“Home by Christmas”: The World War I timetable that wasn’t

In 1914, politicians, newspapers, and soldiers across Europe floated a breezy forecast: the war would be short, and troops would be “home by Christmas.” Germany’s Schlieffen-derived plans envisioned a quick knockout in the West before turning East. Instead, the Western Front froze into trenches from the North Sea to Switzerland; stalemate replaced speed. The war dragged on until November 11, 1918, with casualties measured in the millions and empires—Austro‑Hungarian, Ottoman, Russian, German—either shattered or gone.

Even early surprises hinted the timeline was fantasy. The First Battle of the Marne (September 1914) halted the German advance; by December, the famous Christmas Truce saw opposing soldiers fraternize across the lines—poignant proof they weren’t going anywhere soon. Industrialized warfare—machine guns, barbed wire, artillery—overpowered mobility. The mismatch between prewar planning and technological reality turned a summer crisis into a four-year cataclysm.

A “permanently high plateau”: Wall Street’s pre-crash misread in 1929

In October 1929, famed economist Irving Fisher said stock prices had reached what looked like a “permanently high plateau.” Days later came Black Thursday (Oct. 24) and Black Tuesday (Oct. 29), kicking off the Great Crash. The Dow had peaked at 381 in early September; by July 1932 it had lost about 89% of its value from the high. The mismatch between exuberant rhetoric and market math turned the quote into one of the most replayed forecast misses in finance.

Context mattered but didn’t rescue it. Margin buying was rampant, industrial output was wobbling, and credit strains were tightening—facts that undercut the plateau talk. Fisher later suffered reputational damage, while John Maynard Keynes’s pragmatic “markets can stay irrational” sensibility aged better. The episode keeps economists humble and risk managers vigilant whenever someone declares a new era immune to old cycles.
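The size of that drop is easier to feel with the arithmetic spelled out. Here is a minimal sketch that uses only the two figures quoted in this section (a peak near 381 and a decline of about 89%). The function names are illustrative, and the outputs are rounded implications of those two figures, not historical quotes.

```python
def implied_trough(peak: float, decline: float) -> float:
    """Index level left after falling `decline` (a fraction) from `peak`."""
    return peak * (1.0 - decline)


def gain_to_recover(decline: float) -> float:
    """Fractional gain needed to climb back to the old peak after `decline`."""
    return 1.0 / (1.0 - decline) - 1.0


PEAK = 381.0     # approximate Dow high, early September 1929 (from the text)
DECLINE = 0.89   # approximate peak-to-trough loss by July 1932 (from the text)

trough = implied_trough(PEAK, DECLINE)
rebound = gain_to_recover(DECLINE)

print(f"Implied trough: about {trough:.0f}")
print(f"Gain needed to regain the peak: about {rebound:.0%}")
```

An 89% loss leaves about 11 cents on the dollar, so the index would have to rise roughly ninefold just to return to where the “plateau” began.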

Rockets can’t work in a vacuum—until they did (sorry, New York Times)

In 1920, the New York Times derided Robert Goddard’s rocketry work, suggesting rockets couldn’t operate in a vacuum—a misunderstanding of Newton’s third law and how propulsion expels mass. Goddard kept tinkering anyway, launching the first liquid-fueled rocket in 1926 in Auburn, Massachusetts. By the 1940s, the physics was undeniable; by the 1960s, it was national policy.

The Moon did not, as it turns out, require air for a rocket to push against.

On July 17, 1969—just as Apollo 11 was en route—the Times published a brief correction acknowledging rockets do work in space. That mea culpa became legend, a tidy arc from ridicule to reality. Today, we can cite thousands of successful orbital launches, reusable boosters landing themselves, and interplanetary probes—all powered by momentum conservation the editorial once waved away.
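The momentum argument can be checked numerically. The sketch below is illustrative only: it assumes an idealized rocket in empty space (no gravity, no air), and the masses and exhaust speed are made-up round numbers, not figures from Goddard or Apollo. It throws propellant backward in small chunks, applies conservation of momentum at each step, and compares the result with the standard ideal rocket equation.

```python
import math


def ideal_delta_v(exhaust_speed: float, initial_mass: float, final_mass: float) -> float:
    """Ideal rocket equation: velocity gained by expelling mass, with no air involved."""
    return exhaust_speed * math.log(initial_mass / final_mass)


def simulated_delta_v(exhaust_speed: float, initial_mass: float,
                      final_mass: float, steps: int = 100_000) -> float:
    """Expel propellant in small chunks and apply momentum conservation each time."""
    chunk = (initial_mass - final_mass) / steps
    mass, velocity = initial_mass, 0.0
    for _ in range(steps):
        mass -= chunk  # the chunk leaves backward at exhaust_speed relative to the rocket
        velocity += chunk * exhaust_speed / mass  # the remaining mass gains the balancing momentum
    return velocity


# Hypothetical values chosen only for illustration.
EXHAUST_SPEED = 3000.0  # m/s
INITIAL_MASS = 1000.0   # kg, rocket plus propellant
FINAL_MASS = 400.0      # kg, after the burn

print(f"Formula:    {ideal_delta_v(EXHAUST_SPEED, INITIAL_MASS, FINAL_MASS):.1f} m/s")
print(f"Simulation: {simulated_delta_v(EXHAUST_SPEED, INITIAL_MASS, FINAL_MASS):.1f} m/s")
```

Nothing in either function refers to surrounding air. The rocket pushes against its own exhaust, which is the point the 1920 editorial missed.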

Heavier-than-air flight is impossible (the Wright brothers beg to differ)

Before 1903, notable scientists and editors doubted practical, powered, heavier‑than‑air flight. Then, on December 17, 1903, at Kitty Hawk, North Carolina, the Wright brothers flew: the first hop about 120 feet in 12 seconds, with several flights that day. Skepticism lingered until public demonstrations in 1908–1909 in France and the U.S. showed controlled turns and sustained flight. By 1911, distance and altitude records fell routinely as aviation matured out of disbelief.

What changed wasn’t physics so much as engineering. The Wrights’ three-axis control, wind-tunnel work, and efficient propellers turned theory into practice. Within a decade, aircraft were military assets; by 1919, Alcock and Brown crossed the Atlantic nonstop. In 1927, Charles Lindbergh’s solo New York–Paris flight cemented public faith. The arc from “impossible” to everyday took barely a generation.

Television is a passing fad (tell that to your streaming queue)

Skeptics sniped during TV’s early demos in the 1930s, and again after wartime delays, that people wouldn’t stare at a box for long. RCA’s splashy exhibit at the 1939 New York World’s Fair hinted otherwise. After WWII, set sales exploded; despite a 1948–1952 licensing “freeze,” U.S. adoption soared. By 1955, over half of American households had a television, transforming living rooms and advertising budgets alike.

Decades later, the box didn’t go away; it multiplied. Cable expanded choice in the 1980s–1990s; digital broadcasting followed. Streaming then rewired everything: Netflix passed 260 million subscribers by the end of 2023, while YouTube built a global audience in the billions. Appointment TV ceded ground to on‑demand queues, but the central prediction flopped: screens became the default way we tell and consume stories.

“Guitar groups are on the way out”: Decca says no to the Beatles

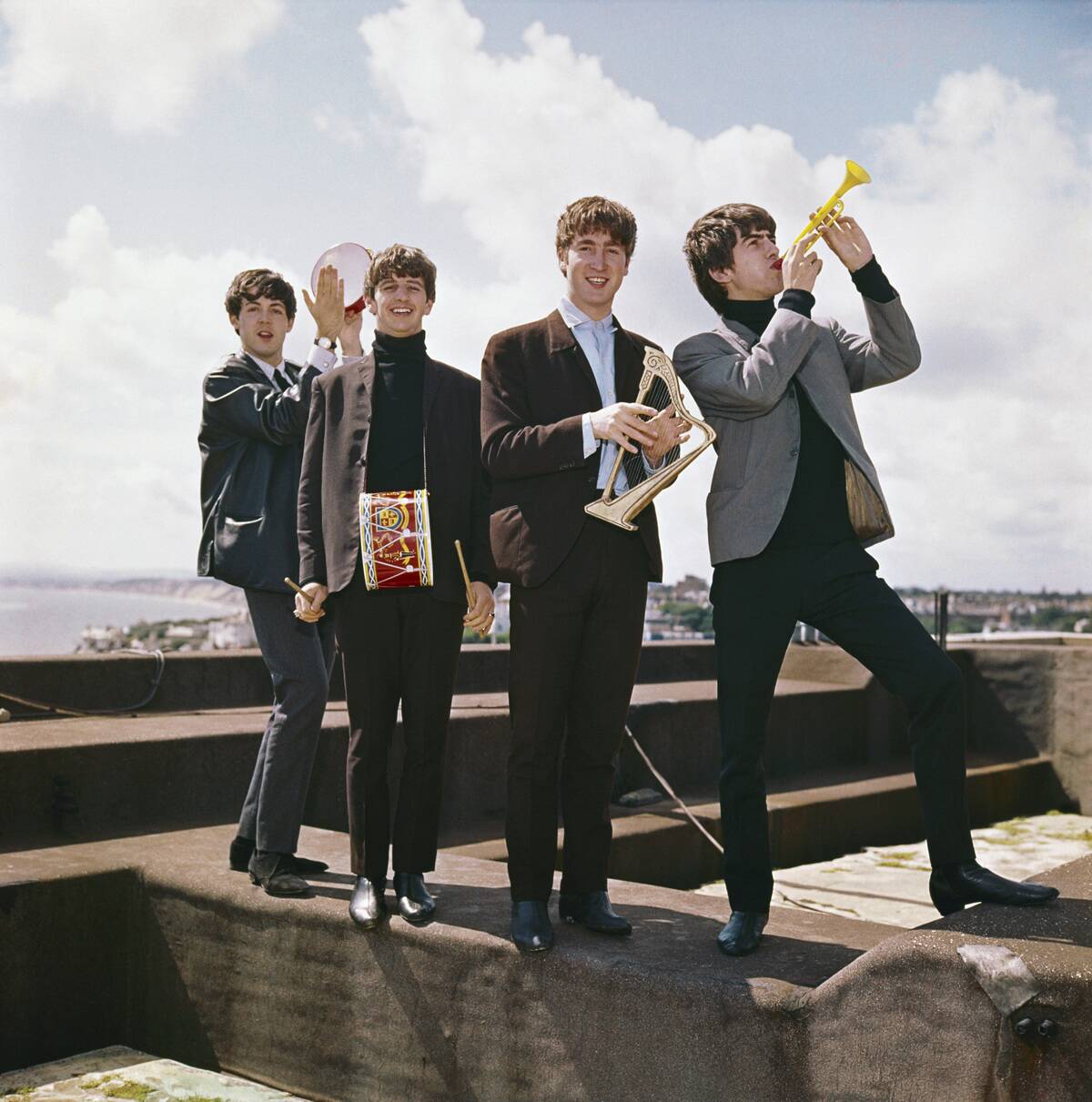

On January 1, 1962, the Beatles auditioned at Decca Studios in London, playing a slate of covers and early originals. Decca passed, reportedly favoring Brian Poole and the Tremeloes; the quip that “guitar groups are on the way out” is often cited (the exact wording is debated), but the decision isn’t. Within months, producer George Martin signed the Beatles to EMI’s Parlophone. “Love Me Do” hit the U.K. charts in October 1962.

The next two years detonated the curve. By 1964, Beatlemania stormed the U.S. with the Ed Sullivan appearance; in 1967 came Sgt. Pepper’s. Decca’s misread became a parable for A&R risk: judging a band in one chilly audition versus imagining what they could become in studios and on stages. The Tremeloes had hits; the Beatles reshaped pop.

Nobody needs a computer at home—famous last words

A 1977 remark by Digital Equipment Corporation’s Ken Olsen, “There is no reason for any individual to have a computer in his home,” is frequently quoted without context. He later said he meant centralized home control, not personal computing. Either way, the market answered fast. The Apple II (1977), IBM PC (1981), and Commodore 64 (1982) pushed computers onto desks and into dens, turning beeps and BASIC into family soundtracks.

By 2000, U.S. Census data showed over half of American households had a computer; by the 2010s, laptops and tablets were standard. The home computer morphed into the smartphone, putting CPUs in pockets and assistants in speakers. The prediction flopped not because homes needed mainframes, but because microprocessors, friendly software, and the internet made computers personal—and irresistible.

The iPhone will flop: A confident call that aged like milk

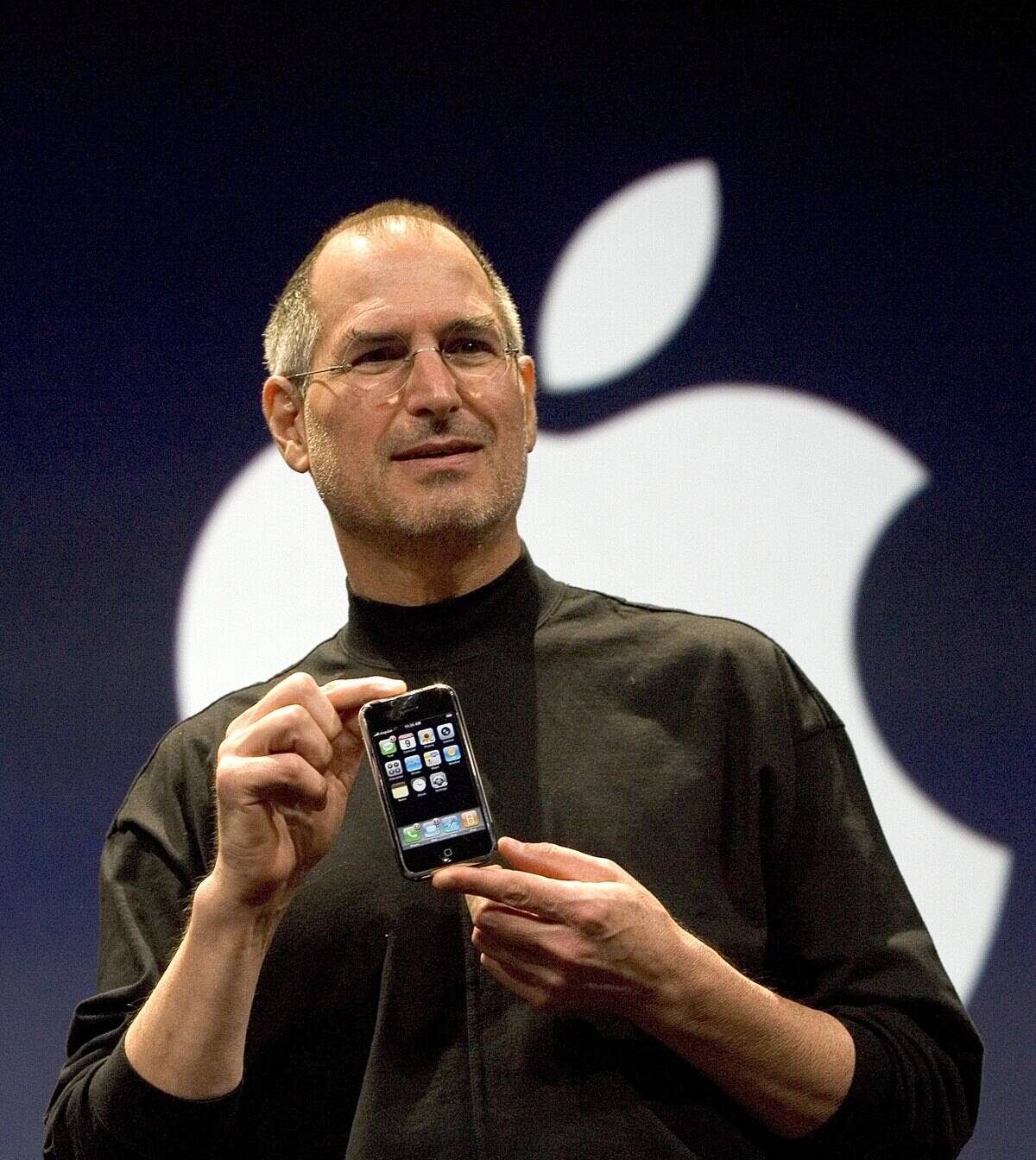

When Apple unveiled the iPhone in January 2007, skeptics pounced. Microsoft’s Steve Ballmer laughed at the $500 price and lack of a physical keyboard, predicting poor business appeal. Others doubted carriers would play ball or that touchscreens could beat BlackBerry. Yet Apple sold about 1 million units in the first 74 days and roughly 6 million of the original iPhone before the 2008 refresh.

Then came the App Store (2008), 3G, and a flywheel. By 2016, Apple had sold over 1 billion iPhones cumulatively; the device became a revenue anchor and a design template the entire industry followed. Today’s phones are basically iPhone descendants: big glass, multi‑touch, app ecosystems. The “flop” forecast now reads like an artifact from the QWERTY era.

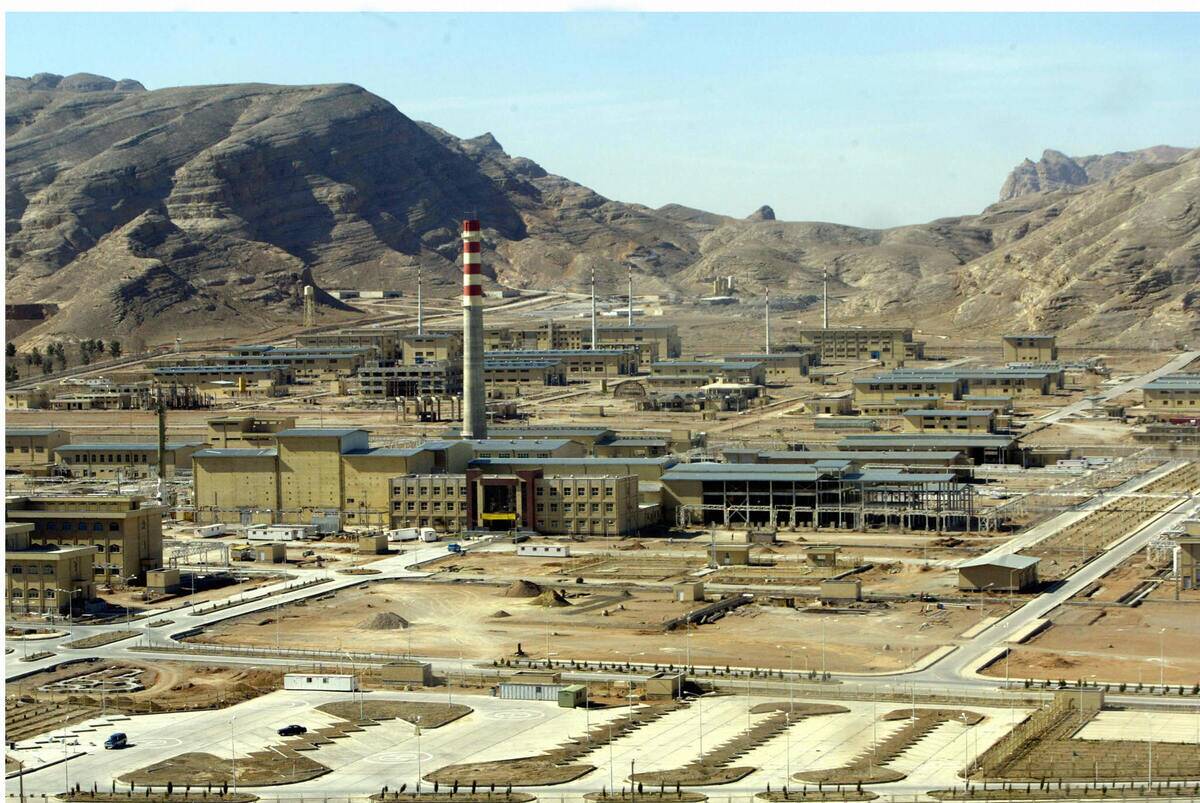

Nuclear power will be “too cheap to meter”: Not quite

In 1954, Atomic Energy Commission chair Lewis Strauss enthused that future electricity would be “too cheap to meter,” a line often linked to nuclear. Historians note he likely had fusion dreams in mind; the phrase nonetheless stuck to fission policy. Reality brought high capital costs, long construction timelines, and strict safety regimes. U.S. nuclear plants generate roughly 18–20% of electricity most years—but the bills definitely have meters.

Recent headlines underline the gap. The Vogtle 3 and 4 reactors in Georgia entered commercial operation in 2023 and 2024 after years of delays, with total costs exceeding $30 billion. Meanwhile, some countries have retired older reactors while others extend lifetimes. Advanced designs and small modular reactors may shift costs someday, but “nearly free” power hasn’t arrived.

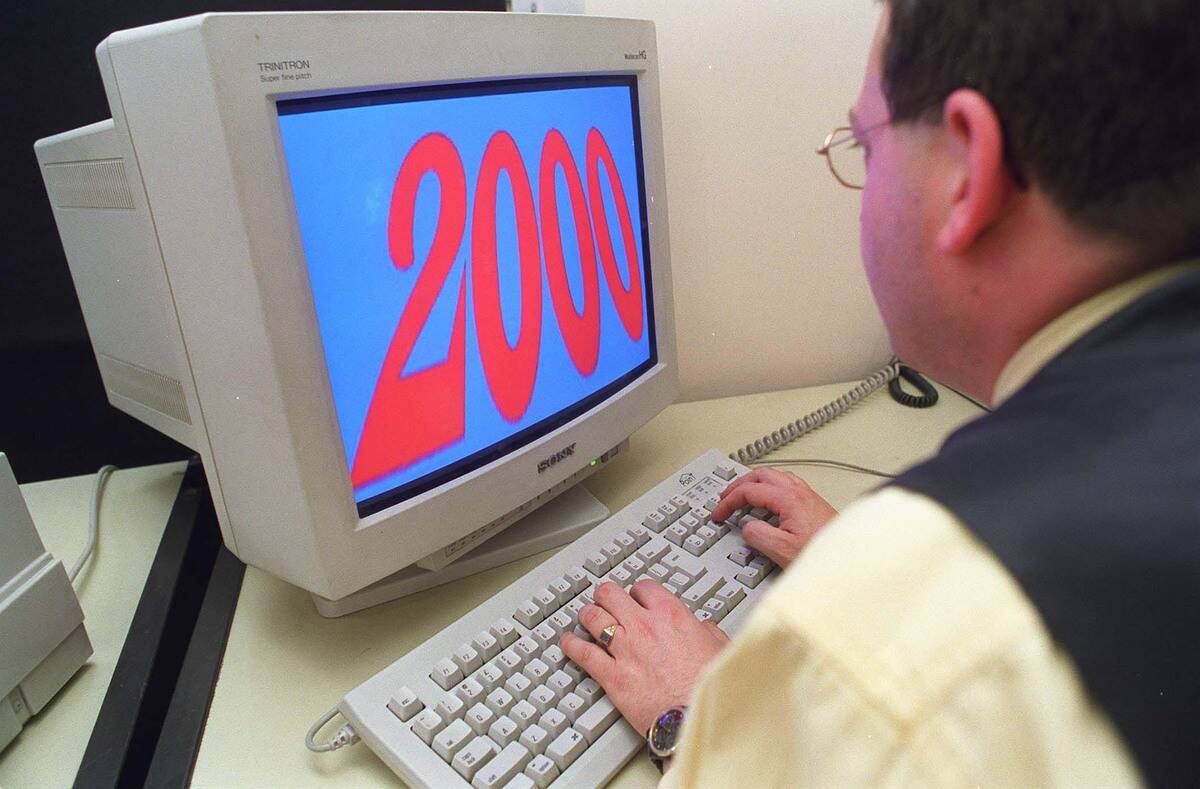

Y2K will end the world (spoiler: it didn’t)

The fear was simple: two‑digit years would roll from 99 to 00 on January 1, 2000, confusing computers into thinking it was 1900. Some predicted planes would fall and power grids would darken. Instead, after massive remediation—Gartner estimated global costs in the hundreds of billions of dollars—midnight passed with more hiccups than disasters. Many U.S. agencies had spent years testing, and airlines kept flying.

There were glitches: some parking meters, credit card terminals, and data systems burped dates; nothing civilization‑ending. Critics later said the scare was overblown; engineers countered that fixes worked precisely because the threat was taken seriously. It’s a rare forecast failure where the punchline is competence quietly doing its job.
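The underlying bug is small enough to show in a few lines. This is a simplified sketch, not code from any real system: it assumes a program that stores years as two digits, and it shows one common style of fix known as windowing. The function names and the pivot value of 50 are illustrative choices.

```python
def naive_expand(two_digit_year: int) -> int:
    """Legacy assumption: every two-digit year belongs to the 1900s."""
    return 1900 + two_digit_year


def windowed_expand(two_digit_year: int, pivot: int = 50) -> int:
    """Windowing fix: values below the pivot are read as 20xx, the rest as 19xx."""
    century = 2000 if two_digit_year < pivot else 1900
    return century + two_digit_year


def years_elapsed(start_yy: int, end_yy: int, expand) -> int:
    """Elapsed years between two stored two-digit years, using the given expansion rule."""
    return expand(end_yy) - expand(start_yy)


# An account opened in 1995 (stored as 95) and checked in 2000 (stored as 00).
print(years_elapsed(95, 0, naive_expand))     # -95
print(years_elapsed(95, 0, windowed_expand))  # 5
```

A negative account age is the kind of quiet error that could ripple into interest calculations, expiry checks, and sorting, which is why so much of the remediation work was unglamorous auditing of date fields.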

The 2012 Mayan apocalypse that stood us up

Remember when December 21, 2012 was supposed to be curtains? Pop culture mashed up Mayan Long Count calendars with doomsday fever, mistaking the end of the 13th baktun for the end of everything. Scholars pointed out that the calendar cycles: the end of one count starts the next, not a cosmic shutdown. NASA even posted a FAQ debunking rogue planets and solar flares queued to ruin the day.

The date arrived, people commuted, and the most disruptive event was probably a movie reference. Archaeology got a boost, though: public interest in Mesoamerican timekeeping surged, and museums used the moment to explain how the Long Count spans roughly 5,125 years from a mythic start in 3114 BCE. Apocalypse fatigue, meet calendar literacy.
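The “roughly 5,125 years” figure falls out of the calendar’s own units. Here is a short sketch of that arithmetic, using the standard Long Count unit sizes (20 kin per uinal, 18 uinal per tun, 20 tun per katun, 20 katun per baktun). The constant names are just labels for this example, and the mean Gregorian year length is used for the conversion.

```python
DAYS_PER_KIN = 1
DAYS_PER_UINAL = 20 * DAYS_PER_KIN      # 20 days
DAYS_PER_TUN = 18 * DAYS_PER_UINAL      # 360 days
DAYS_PER_KATUN = 20 * DAYS_PER_TUN      # 7,200 days
DAYS_PER_BAKTUN = 20 * DAYS_PER_KATUN   # 144,000 days

MEAN_GREGORIAN_YEAR = 365.2425          # average days per year in the Gregorian calendar

cycle_days = 13 * DAYS_PER_BAKTUN
cycle_years = cycle_days / MEAN_GREGORIAN_YEAR

print(cycle_days)                       # 1872000
print(f"about {cycle_years:.0f} years")
```

Thirteen baktuns is simply a large odometer rollover: 1,872,000 days, after which the count carries on.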

Halley’s Comet will poison the planet (1910’s sky-high panic)

When Halley’s Comet returned in 1910, spectroscopes detected cyanogen gas in its tail. French astronomer Camille Flammarion speculated it might “impregnate” Earth’s atmosphere as our planet passed through the tail on May 19. Panic merchants sold “comet pills” and gas masks; newspapers obligingly stoked the mood. The science was clear, though: the gas density was far too low to matter.

Earth sailed through fine. Halley’s roughly 76‑year period made it a repeat visitor; its 1986 return met cameras, not chemophobia. The 1910 scare now reads like an early case study in how a sensational hypothesis plus new tech (spectroscopy) can outrun sober risk assessment, a template we still see whenever fresh data meets old fears.

The “everything’s already been invented” patent-office line (the myth that won’t die)

You’ve probably seen the 1899 quote credited to U.S. Patent Commissioner Charles H. Duell: “Everything that can be invented has been invented.” It’s almost certainly apocryphal. Duell actually praised American ingenuity in official reports and supported expanding the system. The myth persists because it’s perfect for dunking on short‑sightedness, but as history, it’s more urban legend than lesson.

The real record contradicts the quip. Patent volumes surged in the early 20th century, covering automobiles, aviation, and electrification. Duell’s office kept granting them, helping codify the very boom the fake quote pretends he missed. It’s a reminder to check sources—especially when a line seems too perfectly wrong.

Only five computers will ever be needed (another zombie quote)

This chestnut is often pinned on IBM’s Thomas J. Watson Sr. and dated to 1943, but researchers have never found a primary source. IBM has disavowed it for decades. The myth survives because early computers were room‑sized and rare, so it feels plausible someone said it. Meanwhile, the market sprinted past the premise while the quote wandered through slide decks.

Facts moved fast: UNIVAC delivered a commercial computer in 1951; by the late 1950s, dozens of mainframes were in government, academia, and industry. The integrated circuit (1958–1959) and then the microprocessor (1971) collapsed costs and size. Today, billions of microcomputers live in phones, cars, and appliances—more than five, give or take.

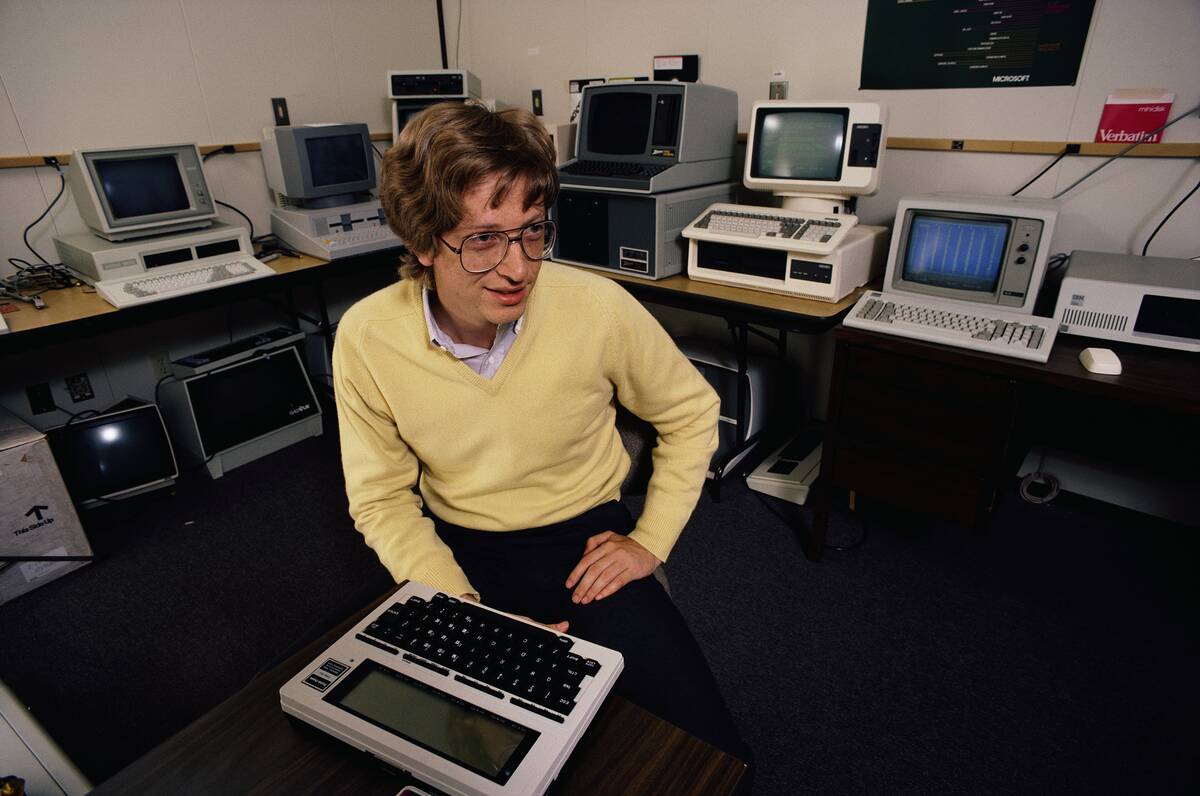

“640K ought to be enough for anybody” (the classic, dubiously sourced groaner)

Bill Gates has repeatedly said he never uttered the line about 640K of memory being enough, and no contemporaneous record proves he did. The number itself came from the IBM PC’s 1981 architecture: a 1MB address space with 640KB of conventional memory and 384KB reserved for system components. Constraints were real; the quote likely isn’t.

Developers worked miracles inside that box—think games, spreadsheets, word processors—until protected mode, 32‑bit computing, and then 64‑bit systems blew the ceiling off. The joke survives because it neatly skewers short‑term thinking, but as history it belongs with the other tech quotes that sound right and cite wrong.
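The 640KB figure is just what remains after the reserved region is carved out of a 1MB address space. This small sketch works through the split. The 20‑bit address width is background on the original PC’s processor, not something stated above, and the variable names are illustrative.

```python
KB = 1024

ADDRESS_BITS = 20                      # assumption: 20 address lines on the original PC's CPU
total_bytes = 2 ** ADDRESS_BITS        # 1 MB of addressable memory
conventional_bytes = 640 * KB          # memory available to programs
reserved_bytes = total_bytes - conventional_bytes  # video memory, ROM, adapters

print(total_bytes // KB)               # 1024
print(reserved_bytes // KB)            # 384
print(hex(conventional_bytes))         # 0xa0000
```

The last line shows where the ceiling sat: program memory stopped at address 0xA0000, and everything above it belonged to the system.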

Radio, telephones, and movies? Early critics who called them fads

Skepticism greeted all three. Bell’s telephone patent (1876) didn’t instantly win believers; Western Union declined to buy it, only to build its own competing network later. Radio’s first commercial broadcast, KDKA’s election returns on November 2, 1920, seemed like a novelty until sets flooded homes. By 1930, about 40% of U.S. households owned a radio, changing news, music, and advertising.

Movies evolved even faster. The Jazz Singer (1927) made talkies inevitable; by the early 1930s, silent films were done in mainstream theaters. Each medium drew “passing fad” barbs because early content looked crude or coverage was sparse. Scale and storytelling fixed that, and soon they weren’t novelties—they were culture.

By 2000 we’ll all have flying cars (and robot maids), right?

Mid‑century magazines and TV promised skyways and domestic droids. The Jetsons (1962) set its future in 2062, but popular memory pulled it closer. Inventors tried: Moller’s Skycar prototypes were never certified; Terrafugia’s Transition inched forward; PAL‑V and Klein Vision logged test flights. As of the 2020s, eVTOL companies like Joby and Archer are testing air taxis, but mass‑market personal flying cars remain rare and heavily regulated.

Robot maids went sideways into robot vacuums.

iRobot launched Roomba in 2002; tens of millions of units later, floor cleaning is autonomous, but dinner isn’t. Smart speakers can set timers; dishwashers still need loading. The dream split: aviation is edging toward short‑hop services, and household robotics picked the low‑hanging chores.

AI and the forever-twenty-years-away prediction problem

Optimism about AI has cycled for decades. In 1965, Herbert A. Simon predicted that machines would be capable “within twenty years” of doing any work a human can do. The mid-1970s and late 1980s saw AI winters, when progress and funding cooled. Yet breakthroughs arrived: backpropagation revived neural networks in the 1980s; deep learning dominated ImageNet in 2012; and AlphaGo defeated a world champion in 2016.

Generative AI models then leapt to prominence, with large language models and diffusion systems producing text, code, and images at scale by 2022–2023. Forecasts about “AGI in X years” continue to scatter widely. The safe takeaway from this hall of wrong calls: treat timelines cautiously, track capabilities with data, and expect surprises—just not on your schedule.